If you are building anything serious on Supabase, even if it is a small side project, a client portal, an internal tool, or a simple product you are testing, having a proper database backup process matters far more than most people realise at the start. It is easy to assume that because Supabase is modern, managed, and developer friendly, your data is always safe and there is nothing to worry about, but that mindset can become expensive the moment something goes wrong. A table can be deleted by mistake, records can be changed during testing, a migration can fail, or a project can simply move in a direction where you need a snapshot of what existed before. This is why learning how to create a backup manually using a reliable desktop database tool like DBeaver is such a practical skill, especially if you want a simple method that you can repeat whenever needed.

What makes this approach especially useful is that DBeaver is open source, widely used, and capable of connecting to many different database systems from one interface, which makes it a very sensible tool if you are already working across multiple platforms. In this case, the goal is straightforward. You want to connect DBeaver to your Supabase PostgreSQL database, confirm the connection works correctly, and then run a backup from inside DBeaver so you end up with a file saved on your computer. It is not a flashy workflow, but it is one of those practical processes that gives you a lot of peace of mind once it is set up and tested properly.

The good thing about this method is that it does not require anything especially advanced. You do not need to live in the command line, and you do not need to build a complicated automation system just to create your first backup. If you are comfortable clicking through a database settings panel and entering connection details into a desktop app, you can get this working. That simplicity fits well with the kind of practical workflow many solo founders, makers, and small business operators need. Sometimes the best system is not the most complex one. Sometimes it is the one you can understand quickly, test immediately, and trust enough to use consistently.

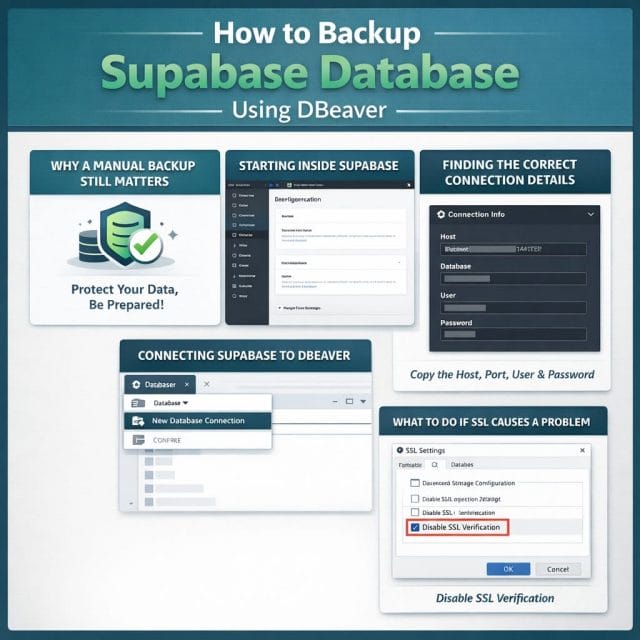

WHY A MANUAL BACKUP STILL MATTERS

There is a tendency in modern software to lean heavily on platforms and assume they will cover every operational need by default, but backups are one of those areas where personal responsibility still matters. Managed services are excellent, and Supabase provides a very capable environment, but your own working habits are still part of your risk management. If you are changing schema, testing imports, editing production data, or experimenting with new features, creating a point in time backup before major work is simply a smart move. It gives you a fallback option and helps reduce the pressure that often comes with making live changes.

For solo entrepreneurs and developers, this becomes even more important because there is usually no separate operations team watching over your shoulder. You are often the person building the feature, testing the fix, updating the records, and troubleshooting the side effects. In that kind of environment, a backup is not just a technical precaution. It is a business protection habit. If your application supports customers, stores lead data, holds orders, or tracks anything valuable to your workflow, then a backup can save hours, days, or even weeks of painful recovery work later on.

Another advantage of doing a manual backup yourself is that it forces you to understand how your database is actually connected. You learn where the host details are, which username is being used, what authentication method applies, and how tools like DBeaver communicate with Supabase. That knowledge is useful beyond this one task. It helps with troubleshooting, migrations, reporting, and future maintenance. A lot of seemingly advanced technical work starts becoming much easier once you are no longer intimidated by the connection settings and structure of your own database environment.

STARTING INSIDE SUPABASE

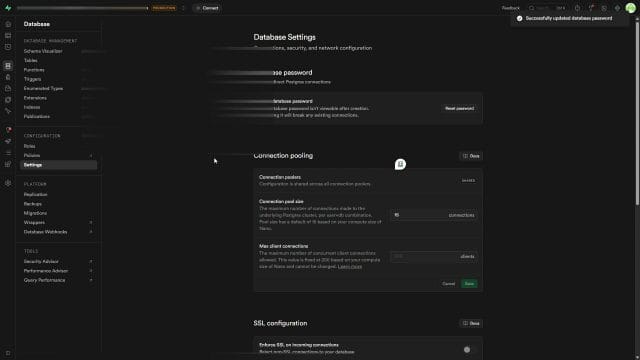

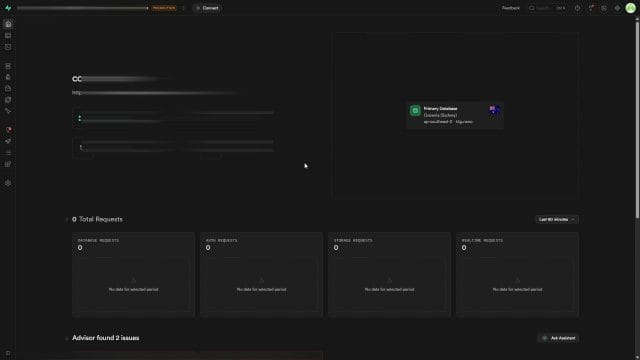

The process begins in your Supabase project dashboard, and the first thing to check is whether your database has a password set that you can use externally in DBeaver. This step is easy to overlook, especially if you have mostly been working inside the Supabase interface and have not connected with a desktop database client before. Without the right password, DBeaver will not be able to authenticate your connection, so this is the foundation of the whole process.

Inside Supabase, go to your database area, then head into the settings section. From there, you will see the option to reset the database password. If you have already configured this in the past and you know the password, you may not need to change anything. If this is your first time, though, generating a new password is the easiest route. Supabase gives you an option to generate a password automatically, which is convenient because it ensures you get something secure rather than relying on something simple and memorable that may not be ideal from a security point of view.

Once the generated password appears, copy it and store it somewhere safe. This part deserves more attention than people often give it. If you just copy the password casually and then lose it, you may end up repeating the reset process and creating confusion for yourself later, especially if you use that same database in other tools or environments. A password manager is the most sensible place for it, but at minimum make sure you can retrieve it when needed. After copying it, complete the reset so the password becomes active, because this is the credential DBeaver will use when it attempts to connect.

This step may seem small, but it is really the gatekeeper to the rest of the workflow. If your password is not properly set or copied, the later connection process will fail and it can become frustrating because everything else may appear to be correct. When people say a tool does not work, it is often not the tool itself but one incorrect detail in the authentication process. Starting cleanly here avoids that problem.

FINDING THE CORRECT CONNECTION DETAILS

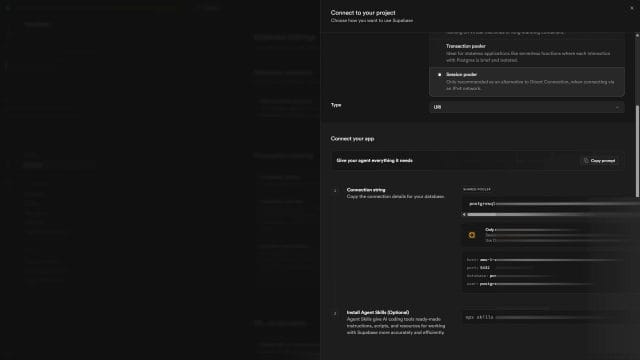

Once the password side is sorted, the next job is to identify the exact database connection settings that DBeaver needs. Supabase gives you these details inside the project, and using the correct set is important because it offers more than one connection mode. In the workflow shown here, the details come from the connect section of the project, which lists a direct connection alongside pooled options. The session pooler settings are the ones that worked well for this kind of desktop connection, and they provide the host, port, database name, and username required to match DBeaver’s fields.

What you are essentially doing here is translating the Supabase connection configuration into DBeaver’s connection form. The host field in DBeaver needs to match the host shown in Supabase. The database field should match the database name. The username should match the supplied username. Then the password field should contain the database password you generated or already had available. Most of the effort here is not technical complexity but careful copying. Small mistakes such as extra spaces, missing characters, or selecting the wrong connection type can stop the process even when everything else is correct.
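If Supabase shows the details as a single connection string rather than separate values, the translation can be sketched in a few lines of Python. This is only an illustration: the URI below is a made-up placeholder rather than a real Supabase host, the function name is my own, and the fallback to a database called postgres is an assumption based on the usual Supabase default.

```python
from urllib.parse import urlparse, unquote


def dbeaver_fields(uri: str) -> dict:
    """Split a postgresql:// URI into the fields of DBeaver's connection form."""
    parts = urlparse(uri.strip())
    return {
        "host": parts.hostname,
        "port": parts.port or 5432,  # 5432 is PostgreSQL's default port
        # Assumption: fall back to "postgres" if the URI names no database.
        "database": parts.path.lstrip("/") or "postgres",
        # Percent-encoded characters in credentials are decoded here.
        "username": unquote(parts.username or ""),
        "password": unquote(parts.password or ""),
    }


# Hypothetical placeholder values, for illustration only.
example = "postgresql://postgres.abcdefgh:s3cret%21@pooler.example.com:5432/postgres"
fields = dbeaver_fields(example)
for name, value in fields.items():
    print(f"{name}: {value}")
```

Each key in the result corresponds to one box in the DBeaver form, which is really all the translation amounts to.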

It is worth slowing down and treating this as a precision task rather than a rushed one. Many connection issues come from trying to move too quickly. A host name copied incorrectly by one character can waste ten minutes of troubleshooting. A password pasted with a hidden space can look valid while still failing. A wrong database name can make it seem like the service is unavailable. If you are methodical here, the rest of the setup becomes very smooth.
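A hidden space is hard to spot by eye, so here is a small sketch of a check you could run on a pasted value before blaming the tool. The function name and the list of characters it looks for are my own choices, not anything Supabase or DBeaver provides.

```python
def find_paste_problems(value: str) -> list[str]:
    """Report common copy and paste defects in a connection value."""
    problems = []
    if value != value.strip():
        problems.append("leading or trailing whitespace")
    # Non-breaking space, zero-width space, tab, and line breaks are easy
    # to pick up when copying from a web page.
    suspicious = ("\u00a0", "\u200b", "\t", "\n", "\r")
    if any(ch in value for ch in suspicious):
        problems.append("hidden or non-printing character")
    return problems


print(find_paste_problems("pooler.example.com "))
```

An empty list means the value is clean as far as this check can tell. It cannot tell you whether the value is actually correct, only that nothing invisible came along with it.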

There is also a broader lesson in this. Tools like DBeaver are not mysterious once you understand that they simply need the same connection information any database client would require. The interface may look technical, but the logic is straightforward. You are telling the client where the database lives, which database to open, who you are, and how to verify your access. Once you think of it that way, it becomes less intimidating and more like filling in a secure login form for a more advanced system.

CONNECTING SUPABASE TO DBEAVER

With the details ready, open DBeaver and create a new connection. The interface is quite friendly for this kind of task. Click the plus icon to add a new database connection, then choose PostgreSQL from the list, because Supabase runs on PostgreSQL. After selecting it, move to the next step where DBeaver presents the connection form. This is where the information from Supabase gets entered into the matching fields.

The host goes into the host field, the port into the port field, the database name into the database field, and the username into the username field. The password you generated earlier goes into the password field. The host and the username are usually the values you must copy exactly. The database name is often obvious from the provided settings, but it is still best to verify every field rather than assume. Once all fields are complete, click finish and let DBeaver attempt the connection.

If everything has been entered correctly, the connection should succeed and your Supabase database will appear in DBeaver’s database navigator. That moment is useful because it confirms two things at once. First, your credentials are valid. Second, DBeaver can communicate with the Supabase database environment from your machine. Once you have reached that point, the hard part is mostly done. The remaining work is just using the built in backup tool correctly.

There is a reassuring simplicity to this stage. You are not installing obscure drivers manually or wrestling with a complex enterprise setup. You are just using a mature database client to log into a PostgreSQL instance hosted by Supabase. For a lot of people, that first successful connection removes a lot of uncertainty. It turns the idea of remote database access from something abstract into something practical and manageable.

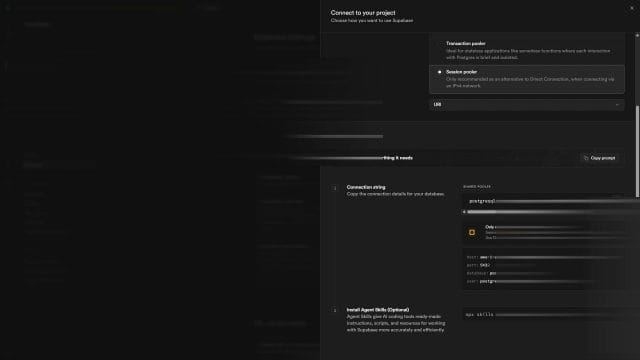

WHAT TO DO IF SSL CAUSES A PROBLEM

One useful note in this workflow is the possibility of an SSL complaint during the connection attempt. Sometimes a database client can raise an SSL related issue depending on the environment, connection route, or expected certificate settings. If that happens, DBeaver includes an SSL section in the connection configuration where you may need to define the appropriate certificate or adjust the SSL behaviour. In the example process here, that extra step was not necessary, which is obviously the ideal outcome, but it is still worth knowing that the option exists in case your setup behaves differently.

The practical mindset here is not to panic if the first connection attempt throws an SSL message. It does not automatically mean the whole method is broken. It usually means the secure connection settings need a closer look. If your host, username, and password are correct, and the only obstacle appears to be SSL, then you are likely quite close to a working connection. DBeaver gives you a place to handle that, and Supabase’s connection documentation can help if you need exact certificate requirements.
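For context, PostgreSQL clients generally express the SSL behaviour as an sslmode setting with a fixed set of values. The sketch below adds that setting to a connection URI so you can see what the options are. It is not what DBeaver does internally, the host is a placeholder, and which mode your project actually needs is something to confirm in the Supabase documentation.

```python
from urllib.parse import urlparse, urlunparse, parse_qsl, urlencode

# The sslmode values defined by PostgreSQL's client library.
VALID_SSL_MODES = ("disable", "allow", "prefer", "require", "verify-ca", "verify-full")


def with_sslmode(uri: str, mode: str = "require") -> str:
    """Return the URI with its sslmode query parameter set to the given mode."""
    if mode not in VALID_SSL_MODES:
        raise ValueError(f"unknown sslmode: {mode}")
    parts = urlparse(uri)
    query = dict(parse_qsl(parts.query))
    query["sslmode"] = mode
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical placeholder host, for illustration only.
print(with_sslmode("postgresql://user@db.example.com:5432/postgres"))
```

The stricter modes, verify-ca and verify-full, are the ones that need a certificate file, which is where the certificate field in DBeaver's SSL section comes in.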

This is one of those moments where patience beats frustration. Too many people abandon a workable process the moment they see one technical warning, but most of the time it is just one configuration step away from success. If the connection works without any SSL adjustment, great. If not, you know where to investigate next rather than assuming the entire setup is impossible.

CHECKING THE DATABASE STRUCTURE BEFORE BACKING UP

After the connection is established, take a moment to expand the database in DBeaver and confirm you can actually browse the structure. This is not a mandatory step in a strict sense, but it is a very sensible one. If you can see the PostgreSQL database, schemas, and tables, you have visual confirmation that DBeaver is connected correctly and that your access level is sufficient for the tasks ahead. It also helps you build confidence in the tool if this is your first time using it with Supabase.

Browsing the structure briefly can also help you notice whether you are connected to the database you expected. This matters more than people think, especially if you work across development, staging, and production environments. A backup created from the wrong environment can lead to confusion later when you try to restore or inspect it. Spending a few seconds verifying the visible tables and schema names is a simple way to avoid that mistake.

Another reason this quick check is useful is that it reinforces the habit of understanding your data before performing operations on it. If you know what is in the database, what tables matter most, and how your application is structured, then the backup process becomes part of a larger disciplined workflow rather than just a random export action. Good operational habits are usually built from these small pauses and checks, not from rushing through every admin task as fast as possible.

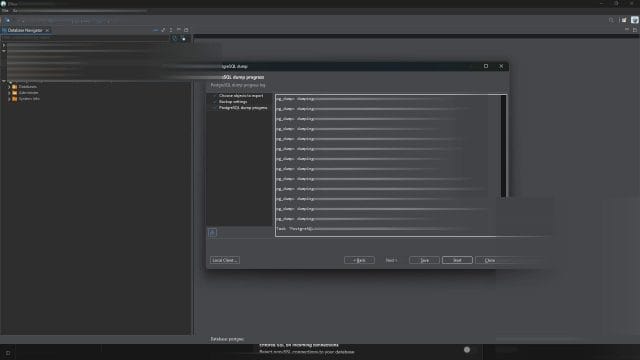

RUNNING THE BACKUP IN DBEAVER

Once you have confirmed the connection and can see the database, the actual backup process inside DBeaver is quite direct. In the database navigator, select the PostgreSQL database, then go to the tools menu and choose the backup option. This opens the backup workflow and lets you define what should be included. In the example process, selecting all is the approach used, which makes sense when the aim is to capture the full database content rather than only certain parts.

At this point, DBeaver will ask for output details such as the file name and folder location. This may seem like a purely administrative step, but it is worth handling properly. Give the backup file a clear, descriptive name so that when you look at it later, you know exactly what it is. A good naming convention can include the project name, environment, and date. Even if you are only creating occasional backups now, this becomes more useful over time as multiple files accumulate.

The destination folder matters as well. Save the file somewhere organised, not just to a random downloads folder where it may be forgotten or accidentally deleted. If you are serious about your project, create a dedicated backup directory structure on your machine or in a secure synced storage location. The point of creating a backup is not simply to generate a file. It is to create a recoverable asset you can actually find and trust later.

After setting the name and folder, click start and DBeaver begins the dump process. This is where the tool goes through the database objects and exports the relevant data into the backup file. Depending on the size of your database, this may finish very quickly or take longer, but in a typical smaller project it is often done without much waiting. Once completed, DBeaver should indicate that the process has finished successfully, which is your signal to move on to the final but important check.
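As far as I understand it, DBeaver's PostgreSQL backup tool hands the actual work to the native pg_dump client. To make the dump step less of a black box, the sketch below builds a roughly equivalent pg_dump command as a list of arguments. It does not run anything, the host and file name are placeholders, and the exact options DBeaver passes may differ from these.

```python
def pg_dump_args(host: str, port: int, user: str, database: str, out_file: str) -> list[str]:
    """Build a pg_dump argument list for a plain SQL dump of one database."""
    return [
        "pg_dump",
        f"--host={host}",
        f"--port={port}",
        f"--username={user}",
        f"--dbname={database}",
        "--format=plain",  # readable SQL; "custom" would give a compressed archive
        f"--file={out_file}",
    ]


# Hypothetical placeholder values. pg_dump reads the password from the
# PGPASSWORD environment variable or a .pgpass file, never from these arguments.
args = pg_dump_args("pooler.example.com", 5432, "postgres.abcdefgh", "postgres", "backup.sql")
print(" ".join(args))
```

Seeing it laid out this way shows that the backup dialog is mostly collecting the same connection details you already entered, plus an output format and a file path.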

What I like about this workflow is that it feels practical rather than abstract. You can see the database, launch the backup, choose where the file goes, and then verify the result. There is something reassuring about watching a straightforward process complete in front of you, especially when compared with setups where everything happens somewhere invisible and you only hope it worked. For many business owners and creators, visible processes are easier to trust because they are easier to confirm.

WHY THE FILE CHECK AFTERWARDS REALLY MATTERS

Once DBeaver reports that the backup is complete, do not stop there. Go to the folder where you told DBeaver to save the file and make sure the backup actually exists. This final verification is one of the simplest but most valuable habits in the whole process. A task is not truly finished just because a progress bar completed. It is finished when the expected output is present, named correctly, and stored where you can access it.

It is surprising how often people assume success without checking the result. Maybe the file was saved to a different folder than expected. Maybe there was a naming mismatch. Maybe permissions or local storage issues affected the output. These things are not common enough to cause constant fear, but they are common enough that a quick manual check is worth doing every time, especially until this workflow becomes second nature to you.

When you open the folder and see the backup file sitting there, that is the real completion point. At that moment, you have moved from intention to actual protection. You now have a stored copy created outside the live environment, and that gives you options if anything changes unexpectedly later. That is the kind of small, practical win that often gets overlooked in fast moving development, but it is exactly the sort of habit that makes a solo operator more resilient over time.

From here, the next level of maturity is not just backing up once, but thinking about frequency, storage discipline, and restoration confidence. A backup file only becomes truly useful when you know it exists, know where it is, and trust that it was created properly. That is why the file check is not an afterthought. It is part of the backup itself.

HOW TO CHECK WHETHER YOUR SUPABASE BACKUP IS ACTUALLY USEFUL

Once the backup file has been created and you can see it sitting in your chosen folder, the next step is the one many people skip, and it is probably the most important one in the whole process. A backup only becomes valuable when you know it can be trusted, located quickly, and used again when something goes wrong. It is very easy to feel relieved after clicking the backup option in DBeaver and seeing a success message, but in practice that is only the beginning of a safer workflow rather than the end of it.

If you are running a small app, a client project, or an internal business tool on Supabase, then the real question is not whether you created a backup file once. The better question is whether you could restore from it calmly and confidently on a stressful day when a migration failed, some important records were deleted, or a test change damaged production data. That is why it makes sense to spend a few extra minutes checking the file properly, understanding what type of backup it is, and organising it in a way that still makes sense weeks or months later.

A practical first check is simply to review the file name, file size, and save location. If the file name is vague, for example something generic that does not clearly identify the project or environment, it becomes much harder to use later. A file called backup.sql might feel fine today, but if you have several projects or multiple environments such as local, staging, and production, that naming approach becomes messy very quickly. A much safer pattern is something like projectname-production-2026-04-22.sql because it tells you what it is without forcing you to open it first, and the year, month, day order means files sort chronologically by name.

- Include the project name

- Include the environment name

- Include the date in a clear order

- Keep the format consistent every time

- Store backups in a dedicated folder instead of random desktop locations
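The naming rules above are simple enough to capture in a tiny helper, which keeps the format consistent without relying on memory. This is just one possible convention, and the function name is my own.

```python
from datetime import date


def backup_filename(project: str, environment: str, day: date, ext: str = "sql") -> str:
    """Build a name like projectname-production-2026-04-22.sql."""

    def slug(text: str) -> str:
        # Lower-case the text and join its words with hyphens.
        return "-".join(text.lower().split())

    # isoformat() gives year-month-day, so names sort chronologically.
    return f"{slug(project)}-{slug(environment)}-{day.isoformat()}.{ext}"


print(backup_filename("projectname", "production", date(2026, 4, 22)))
```

You would then type or paste the result into the file name box when DBeaver asks for the output details.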

Another useful check is to confirm the file is not suspiciously tiny. The exact size will vary depending on your database, but if you expected a meaningful amount of data and the backup file is only a few kilobytes, that should make you pause and investigate. In some cases that may still be normal if your database is almost empty, but if you know you have tables full of user data, orders, logs, or content records, then a very small output file may be a sign that something was not included or that you backed up the wrong target.
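If you want to make that check routine, a sketch like the following does it in one call. The 10,000 byte threshold is an arbitrary assumption of mine. Pick a number that makes sense for the amount of data you know you have.

```python
from pathlib import Path


def check_backup_file(path, min_bytes: int = 10_000) -> str:
    """Return 'missing', 'suspiciously small', or 'ok' for a backup file."""
    backup = Path(path)
    if not backup.is_file():
        return "missing"
    if backup.stat().st_size < min_bytes:
        return "suspiciously small"
    return "ok"


# Hypothetical file name; this reports "missing" unless such a file exists.
print(check_backup_file("projectname-production-2026-04-22.sql"))
```

A result of suspiciously small is a prompt to investigate, not proof of a problem, since an almost empty database will legitimately produce a small file.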

At this stage, I also think it is worth opening the file if the backup was generated in a readable SQL format. You do not need to inspect every line, but scrolling through it gives you a very fast sense of whether it looks legitimate. You should normally see database statements, schema references, table related content, and other recognisable PostgreSQL backup material rather than an empty looking file or a file that appears corrupted. This is a very simple confidence check, but it can save time later.
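The same glance can be done programmatically. A plain SQL dump produced by pg_dump begins with a comment header containing the words PostgreSQL database dump, while a custom format archive begins with the bytes PGDMP. The sketch below reads only the start of the file and reports which of the two it looks like. The function name is my own, and it assumes the file came from pg_dump, which is what I understand DBeaver to use.

```python
def dump_format(path) -> str:
    """Guess whether a backup file is a plain SQL dump or a custom archive."""
    with open(path, "rb") as f:
        head = f.read(4096)  # the first few kilobytes are enough
    if head.startswith(b"PGDMP"):
        return "custom"
    if b"PostgreSQL database dump" in head:
        return "plain"
    return "unknown"
```

An answer of unknown does not necessarily mean the file is broken, but it is a good reason to open it and look more closely before relying on it.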

What makes this especially important with Supabase is that many people assume the managed platform removes the need to think about backup quality. In reality, Supabase makes hosting easier, but your responsibility for operational safety does not disappear. If your app matters to your business, or even if it is just a side project you have invested serious time into, then you want a repeatable habit that gives you confidence rather than a vague feeling that things are probably okay.

TRY RESTORING TO A SAFE TEST DATABASE BEFORE YOU NEED IT

The best way to prove a backup works is to restore it somewhere safe. This does not mean you should experiment on your live Supabase database, and in fact that is exactly what you should avoid. The smarter approach is to create a disposable test database, a local PostgreSQL instance, or another non production environment where you can practise the restore process without any risk to real users or live data. This is the point where your backup habit becomes much more mature, because you stop treating backups like a box ticking task and start treating them as recoverable assets.

If you already have PostgreSQL running locally, that is often the easiest place to test. You can create a temporary database, connect to it through DBeaver, and then use the restore tools to import the backup file you created. The exact restore flow can vary slightly depending on the backup format and your DBeaver setup, but the principle stays the same. You are checking whether the schemas, tables, and ideally the data itself can be recreated in a usable state.

When doing this for the first time, expect it to feel a little slower than the backup process. That is normal. Restoring introduces more opportunities for version mismatches, permissions issues, or object conflicts if the target database is not truly empty. That is another reason why a fresh test database is a good idea. You want the cleanest possible environment so the restore result tells you something meaningful.
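The format matters because the two kinds of file are restored differently in PostgreSQL. A plain SQL dump is replayed as a script, which is the job psql does, while a custom format archive is loaded with pg_restore. The sketch below builds the matching command as a list of arguments without running it. The function, its defaults, and the local-only guard are my own assumptions, added so the example cannot be pointed at a live host by accident.

```python
def restore_command(dump_file: str, fmt: str, database: str,
                    host: str = "localhost", port: int = 5432,
                    user: str = "postgres") -> list[str]:
    """Build a restore command for a local test database."""
    # Safety assumption for this sketch: only ever target a local server.
    if host not in ("localhost", "127.0.0.1"):
        raise ValueError("refusing to build a restore command for a non-local host")
    common = [f"--host={host}", f"--port={port}",
              f"--username={user}", f"--dbname={database}"]
    if fmt == "plain":
        # Stop at the first error so problems are not buried in the output.
        return ["psql", *common, "--set=ON_ERROR_STOP=1", f"--file={dump_file}"]
    if fmt == "custom":
        # --no-owner skips ownership commands for roles that may not exist locally.
        return ["pg_restore", *common, "--no-owner", dump_file]
    raise ValueError(f"unsupported dump format: {fmt}")


# Hypothetical file and database names, for illustration only.
print(" ".join(restore_command("projectname-production-2026-04-22.sql", "plain", "restore_test")))
```

Inside DBeaver you would reach for the equivalent tool from the menus rather than typing this, but knowing which underlying client applies makes the error messages much easier to interpret.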

After the restore finishes, inspect the structure in DBeaver just as carefully as you did with the live Supabase connection earlier. Expand the schemas, open a few tables, and verify row counts where possible. If there are specific tables that matter most to your application, such as users, products, orders, settings, or content tables, those are the ones worth checking first. The goal here is not to perform a forensic audit of every record. The goal is to confirm that the backup is not merely present, but practically restorable.

- Create a test database first

- Use DBeaver restore tools rather than guessing

- Restore into a non production environment only

- Check schemas and key tables after the restore

- Keep notes on any restore issues you encounter
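For the row count check, one low-effort approach is to note the counts for your key tables on both sides, for example by running SELECT count(*) against each table in DBeaver's SQL editor, and then compare them. The sketch below does the comparison only. The table names and numbers are invented for illustration.

```python
def compare_row_counts(source: dict, restored: dict) -> dict:
    """Return tables whose counts differ, mapped to (source, restored)."""
    tables = sorted(set(source) | set(restored))
    return {
        table: (source.get(table), restored.get(table))
        for table in tables
        if source.get(table) != restored.get(table)
    }


# Hypothetical counts noted by hand from each database.
live = {"users": 120, "orders": 4310, "settings": 8}
test_restore = {"users": 120, "orders": 4298}
print(compare_row_counts(live, test_restore))
```

A table that shows None on the restored side never made it into the test database at all. Small differences in busy tables can simply mean the live data changed after the backup was taken, so treat mismatches as questions to answer rather than automatic failures.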

This restore test also reveals something else that is easy to overlook. It shows you how long recovery may actually take. For a tiny project, recovery might be quick and straightforward. For a larger database, the process may take longer than expected, especially if you need to coordinate it carefully. That timing matters because backup strategy is not only about whether a copy exists. It is also about how quickly you can recover when the pressure is real.

If you find errors during the restore, that is not a reason to give up on DBeaver or on manual backups. It is actually a valuable discovery. Finding restore friction during a calm test is far better than finding it during an emergency. You can then adjust your process, change export options, review formats, or refine your environment documentation until the workflow becomes repeatable. In practical terms, that is exactly what a good backup routine should do for you.

BUILD A SIMPLE BACKUP SCHEDULE YOU WILL ACTUALLY FOLLOW

One of the easiest mistakes is to do one successful backup and then forget about it for months. That usually happens because the process feels manual, slightly technical, and easy to postpone. The fix is not necessarily to jump straight into heavy automation. Sometimes the better move is to create a simple schedule that matches the reality of your project. If your app changes often, a monthly backup may be too relaxed. If your database rarely changes, a daily manual backup may be excessive. What matters is choosing a rhythm you can realistically maintain.

For a solo founder or small team, a sensible starting point might be to back up before any major change and also on a regular timetable. For example, before schema migrations, before bulk imports, before deploying important features, and then perhaps weekly or fortnightly depending on the pace of change. This approach protects you both from planned risk and from the slow drift of everyday data changes that build up over time.
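That rule of thumb, a backup before anything risky plus a regular timetable, is small enough to write down as code. This is a sketch of the decision only, with a weekly interval assumed as the default.

```python
from datetime import datetime, timedelta


def backup_due(last_backup, now: datetime,
               max_age: timedelta = timedelta(days=7),
               risky_change_planned: bool = False) -> bool:
    """Decide whether a new backup should be taken now."""
    # Always back up before risky work, or if no backup exists yet.
    if risky_change_planned or last_backup is None:
        return True
    return now - last_backup >= max_age


print(backup_due(datetime(2026, 4, 10), datetime(2026, 4, 22)))
```

Changing max_age to fourteen days gives the fortnightly version, and the risky_change_planned flag covers migrations, bulk imports, and important deployments.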

A practical way to think about it is to tie backups to business events rather than just calendar dates. If you are launching a new feature, changing billing logic, editing user roles, or restructuring content tables, that is a natural trigger for a new backup. If you are running an ecommerce or booking style application, then any period of increased activity also makes backups more important because the cost of losing recent data becomes higher.

To make the schedule easier to follow, keep it visible. A recurring calendar reminder works surprisingly well, especially when the process only takes a few minutes once you know your connection is working. You can also keep a small operations checklist in your project documentation that includes backup creation, verification, and storage. This sounds basic, but basic systems are often the ones that actually get used consistently.

It also helps to decide what you want to keep. Not every backup has to be stored forever. You might keep daily backups for a short period, weekly backups for a longer period, and milestone backups around major releases for even longer. The point is to avoid a chaotic folder full of files with no retention logic. Storage is cheap compared with business downtime, but organisation still matters because clutter reduces confidence.
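To show what a retention rule might look like in practice, the sketch below keeps every backup from the last seven days and the newest backup from each calendar week for the last eight weeks. Those two numbers are assumptions for illustration, and milestone backups are deliberately not handled here because they are best kept by hand.

```python
from datetime import date


def backups_to_keep(days, today: date, daily_days: int = 7, weekly_weeks: int = 8) -> set:
    """Return the backup dates to retain under a daily plus weekly rule."""
    keep = set()
    newest_in_week = {}
    for day in sorted(days):
        age = (today - day).days
        if age < 0:
            continue  # ignore dates in the future
        if age < daily_days:
            keep.add(day)
        if age < weekly_weeks * 7:
            # Keyed by ISO year and week; later dates overwrite earlier ones.
            newest_in_week[tuple(day.isocalendar())[:2]] = day
    keep.update(newest_in_week.values())
    return keep


dates = [date(2026, 4, 22), date(2026, 4, 21), date(2026, 4, 10),
         date(2026, 4, 8), date(2026, 1, 1)]
for kept in sorted(backups_to_keep(dates, today=date(2026, 4, 22))):
    print(kept.isoformat())
```

Anything not in the returned set is a candidate for deletion. I would still review the list before removing files rather than deleting automatically.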

WHERE TO STORE YOUR BACKUP FILES MORE SAFELY

Saving your backup to your local machine is a perfectly reasonable first step, but it should not be the only place it lives. If your laptop fails, is lost, or becomes corrupted, then a backup stored only on that same device is obviously less helpful. A stronger approach is to keep at least one additional copy somewhere separate, ideally in a secure cloud storage location or an external drive that is managed properly. This is not about creating a huge enterprise system. It is simply about avoiding a single point of failure.

For personal projects and small businesses, a practical setup might be to store the original export in a dedicated local folder and then copy it to a secure cloud storage service that you already trust for business files. The exact service matters less than the discipline of using one consistently and protecting access to it properly. If the backup contains sensitive or personal data, which many database backups do, then you should think carefully about encryption, access control, and who can download those files.
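When you make the second copy, it is worth confirming that it matches the original byte for byte. A minimal sketch using a SHA-256 checksum follows. The destination is whatever synced or external folder you already use, the function names are my own, and this does not encrypt anything.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_and_verify(src, dest_dir) -> Path:
    """Copy a backup into dest_dir and check the copy matches the original."""
    dest_dir = Path(dest_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / Path(src).name
    shutil.copy2(src, dest)  # copy2 also preserves the file's timestamps
    if sha256_of(src) != sha256_of(dest):
        raise OSError(f"copy of {src} does not match the original")
    return dest
```

If the function returns without raising, the second copy is identical to the first at the moment of copying.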

It is worth remembering that a Supabase database backup may contain far more than harmless test content. Depending on your app, it could include customer information, internal records, application settings, or commercially sensitive data. That means backup handling is not just a technical matter. It becomes a security and privacy responsibility too. Even if you are a one person operation, you still need to act like a responsible custodian of that data.

- Keep one local copy for quick access

- Keep one separate copy off the machine

- Restrict who can access backup folders

- Use clear folder structures by project and environment

- Review old backups and remove what you no longer need

If you work across multiple devices, it is especially useful to standardise your folder names and backup locations. This reduces friction when you need to find a file quickly. A structure such as project name, then environment, then year and month folders can work well because it remains readable without opening anything. The more obvious you make the structure, the more useful it becomes under pressure.
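That folder layout can be generated rather than remembered. The sketch below builds the path only and leaves creating the folder to you. The root folder name is a placeholder.

```python
from datetime import date
from pathlib import Path


def backup_dir(root, project: str, environment: str, day: date) -> Path:
    """Build root/project/environment/year/month for a given backup date."""
    return Path(root) / project / environment / f"{day:%Y}" / f"{day:%m}"


# Hypothetical root folder and project name.
print(backup_dir("backups", "projectname", "production", date(2026, 4, 22)).as_posix())
```

Calling mkdir(parents=True, exist_ok=True) on the result creates the whole chain of folders in one step if they do not exist yet.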

There is also a mindset shift here that I think matters. Backups should not feel like digital leftovers pushed into random folders. They are part of how you operate as a founder, developer, or site owner. When treated that way, it becomes much easier to maintain standards around storage, naming, checking, and restoration.

COMMON PROBLEMS YOU MIGHT RUN INTO WITH DBEAVER AND SUPABASE

Even with a straightforward workflow, there are still a few common issues that can trip people up. None of these are unusual, and most can be solved with a bit of patience and careful checking. The key is to avoid changing five things at once. When something fails, slow down and verify each connection detail, each setting, and each assumption one by one.

The first common problem is simply using the wrong connection details. This often happens when copying the host, database, username, or password from Supabase and accidentally including extra spaces, outdated credentials, or the wrong endpoint. If DBeaver refuses the connection, go back to the Supabase dashboard and compare every field carefully rather than guessing.

Another issue is confusion between environments. Some people back up a database successfully but later realise they were connected to a development or test environment rather than production. That is one reason the earlier verification step matters so much. Before exporting anything, take a moment to inspect the schemas and tables and make sure the data looks like the environment you intended to back up.

SSL and security settings can also cause friction depending on your setup. If you saw SSL issues earlier when establishing the connection, expect the same settings to matter whenever you reconnect to run a backup or a restore. Usually this is not a sign that the whole method is wrong. It just means the database expects a specific secure connection behaviour and DBeaver needs to match it correctly.

A less obvious issue is saving the backup somewhere forgettable. Technically the export may have succeeded, but if the file is buried in a temporary downloads folder or mixed in with unrelated files, you are creating future stress for yourself. This is not a software failure, but it is still an operational failure. Reliable backups are about both successful export and successful retrieval.

There can also be cases where the backup completes but the resulting file does not contain what you expected. If that happens, check the export options you selected, confirm you chose the correct database objects, and consider running a small restore test. That restore test often reveals problems more clearly than staring at the file itself. In other words, when in doubt, prove the backup by using it somewhere safe.

QUICK TROUBLESHOOTING CHECKLIST

- Recheck the Supabase host, database name, username, and password

- Make sure you are connecting to the intended environment

- Review SSL related settings if connection errors appear

- Confirm the backup file exists in the folder you expected

- Check the file size for anything obviously unusual

- Open the file if it is readable and inspect it briefly

- Test a restore to a safe database if you want stronger confidence
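The file checks in the list above (does the file exist, is the size plausible, what does the start of it look like) can be roughed out in a few lines. Treat this as a sketch under assumptions: it presumes the export is pg_dump-style output, where a plain SQL dump normally begins with a "PostgreSQL database dump" comment and a custom-format archive begins with the bytes `PGDMP`. The function name and the example path are hypothetical.

```python
from pathlib import Path


def describe_backup(path: Path) -> str:
    """Give a one-line summary of a backup file without restoring it."""
    if not path.is_file():
        return "missing"
    size = path.stat().st_size
    if size == 0:
        return "empty"
    # Only the first few hundred bytes are needed to recognise the format.
    with path.open("rb") as handle:
        head = handle.read(512)
    if head.startswith(b"PGDMP"):
        return f"custom-format archive, {size} bytes"
    if b"PostgreSQL database dump" in head:
        return f"plain SQL dump, {size} bytes"
    return f"unrecognised content, {size} bytes"


# Hypothetical location; point this at the file DBeaver actually wrote.
print(describe_backup(Path("backups/example.sql")))
```

This kind of inspection is a quick sanity check and not proof that the backup is usable. Only a restore into a safe database gives you that.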

Most of the time, the solution is not especially dramatic. It is usually about accuracy, patience, and having a methodical routine. That is good news because it means the process is learnable without needing deep database expertise.

WHEN MANUAL BACKUPS ARE ENOUGH AND WHEN TO LEVEL UP

For many people, manual backups through DBeaver are a very sensible starting point. They are easy to understand, relatively accessible, and they help you become more familiar with your Supabase database. If you are running a side project, an early-stage product, a low-change internal tool, or a personal application with manageable data volume, this workflow can be completely appropriate as long as you do it consistently and verify the results.

However, there comes a point where manual backup alone may stop being enough. If your application is growing, if the data changes many times a day, if revenue depends on it, or if multiple people are making schema changes, then you may want more than a manual habit. At that stage, you would typically start thinking about a broader backup and recovery strategy that includes automation, retention rules, documented recovery steps, and perhaps staged environments specifically designed for safer testing.
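To make "retention rules" a little more concrete, here is a minimal sketch of the simplest rule there is: keep the newest few backups and list the rest as candidates for removal. The folder name, the `*.sql` pattern, and the number of files to keep are assumptions chosen for illustration, and the function deliberately deletes nothing so you can review its output first.

```python
from pathlib import Path


def backups_to_prune(folder: Path, keep: int) -> list[Path]:
    """Return the backup files older than the newest `keep`, newest first."""
    if keep < 0:
        raise ValueError("keep must be zero or greater")
    # Sort by modification time so the most recent backups come first.
    files = sorted(folder.glob("*.sql"), key=lambda p: p.stat().st_mtime, reverse=True)
    return files[keep:]


# Hypothetical folder and count; this only prints, it never deletes.
for path in backups_to_prune(Path("backups"), keep=7):
    print("would delete", path)
```

A real retention policy usually mixes daily, weekly, and monthly copies, but even a plain "keep the last seven" rule is a step up from a folder that grows forever.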

That does not mean the manual process becomes useless. In fact, it often remains a valuable layer even when you have more advanced systems in place. Manual backups can be excellent before risky changes, during migrations, before experimenting with table structures, or whenever you want a human-verified snapshot. The value of DBeaver here is that it gives you a direct, understandable tool for taking control without needing to drop immediately into command-line workflows.

I think this is especially relevant for the kind of person building practical digital projects solo or in a very small team. You do not always need the most complex setup first. What you do need is a workflow that is clear, repeatable, and trusted. If DBeaver helps you build that habit, then it is already doing an important job.

A BETTER WAY TO THINK ABOUT BACKUPS AS PART OF RUNNING YOUR PROJECT

By this point, the bigger lesson is not only how to click through DBeaver and generate a file from your Supabase database. It is that backups are part of how you run your project responsibly. They sit alongside updates, testing, documentation, and access control as one of those quiet habits that rarely feel urgent until the day they suddenly become the most important thing in the room. When that day comes, the people who benefit are usually the ones who kept the process simple enough to maintain and strict enough to trust.

So if you are using Supabase and want a practical way to protect your data without overcomplicating things, DBeaver gives you a solid path. Connect carefully, verify the correct database, export with a clear naming convention, store the file properly, and when possible, test a restore somewhere safe. That combination turns a basic backup into something genuinely useful. It also gives you more confidence in your own systems, which is valuable whether you are building client work, an internal tool, or the next version of your own product.

The real win is not having a folder full of backup files. The real win is knowing that if something breaks, you already have a calm, proven process to recover from it, and that kind of confidence is one of the most practical advantages you can give yourself when you are building online.

Affiliate Compensated: there are some articles with links to products or services that I may receive a commission.